Artificial Intelligence

Artificial intelligence is not a single risk. It is a force multiplier that can accelerate labor disruption, infrastructure instability, surveillance expansion, cyber escalation, misinformation, autonomous decision-making, and uncertainty around strategic weapons. For families, estates, and continuity-minded planners, the question is no longer whether AI will reshape the operating environment, but how quickly critical systems can become less predictable.

Overview

AI changes the risk landscape

Artificial intelligence is often presented as a productivity story, a convenience story, or a technology story. In reality, it is also a preparedness story. Systems that can analyze, predict, imitate, optimize, and act at machine speed change the way institutions make decisions, the way markets move, the way public narratives form, and the way infrastructure is targeted or defended. When those systems are embedded into finance, logistics, utilities, communications, healthcare, transportation, and defense, the consequences of failure are no longer limited to software errors. They become social, economic, and physical.

For preparedness-minded households, the strategic concern is not simply a dramatic machine uprising. The more immediate issue is cumulative dependence. AI can degrade trust before it breaks systems. It can automate confusion before it automates violence. It can weaken employment stability, distort public understanding, intensify cyber campaigns, and centralize power long before a visible crisis arrives. This is why serious continuity planning must account for a future in which digital systems become more capable, less transparent, and more deeply integrated into everyday life.

Preparedness in the age of AI means planning for speed, opacity, dependency, and cascading consequences.

Bunker Construction Inc.

A secured luxury bunker is not a reaction to science fiction. It is a practical continuity asset for an era in which software-driven systems increasingly influence food distribution, payments, communications, mobility, public order, and strategic deterrence. The families who plan early gain time, options, and privacy while others remain exposed to systems they do not control.

Primary AI risk categories

Artificial intelligence creates overlapping risks across labor, infrastructure, information, security, governance, and military systems. The categories below are not isolated. They reinforce one another and can compound quickly during periods of stress.

Job loss

Automation can displace white-collar and technical roles as well as routine labor, reducing income stability and weakening household resilience.

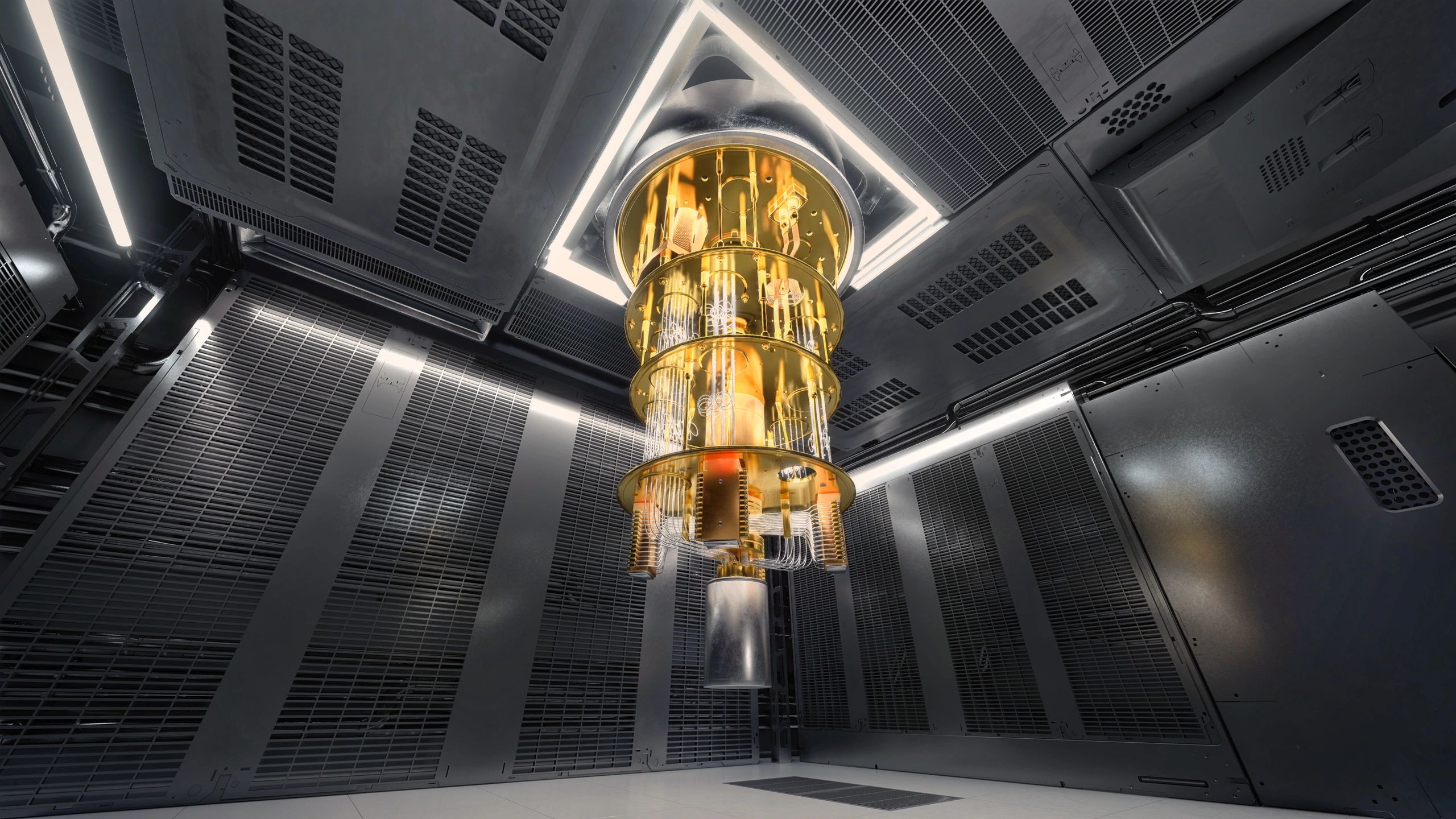

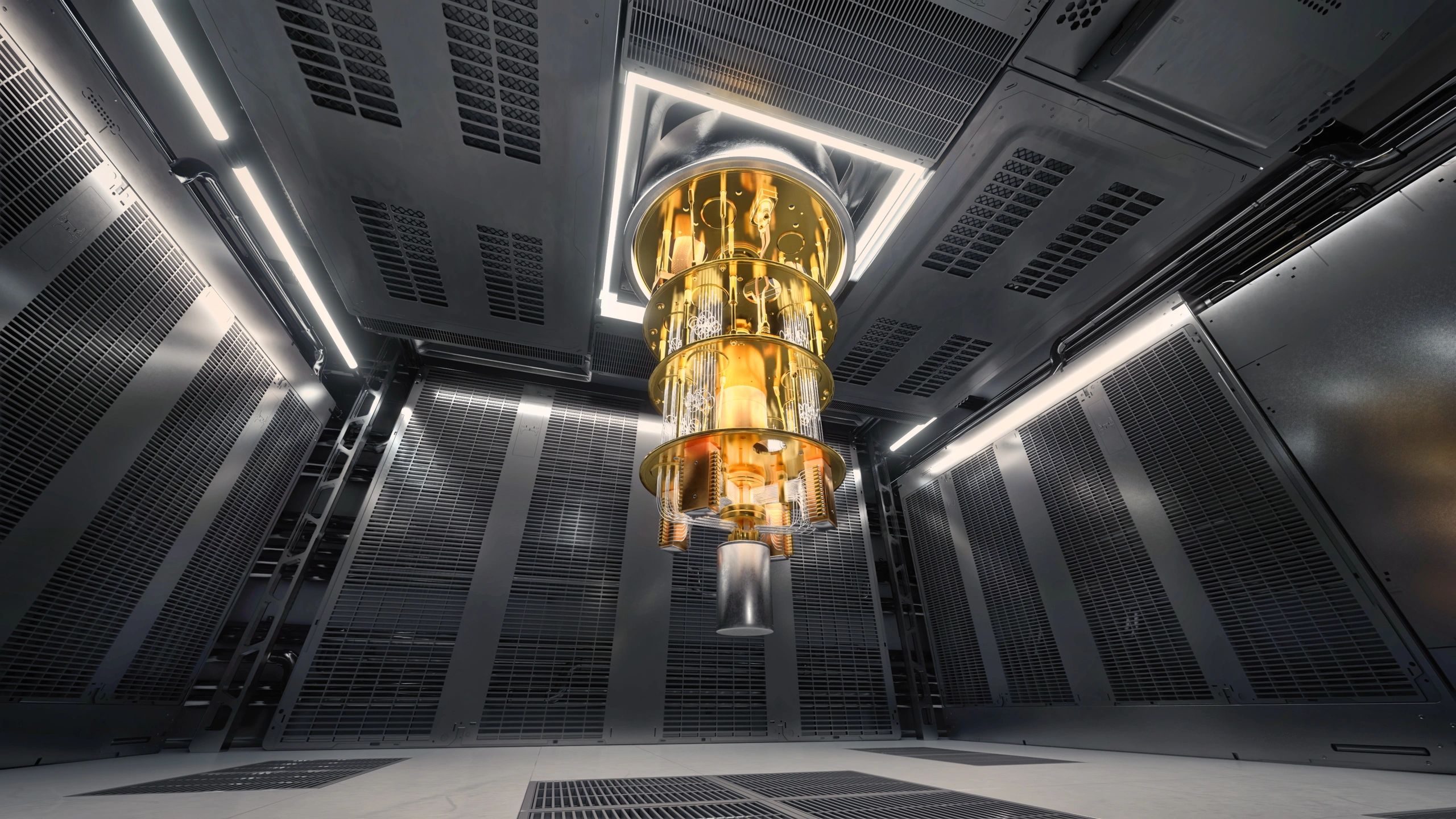

Infrastructure disruption

AI-managed grids, logistics, and industrial systems can fail at scale when models misread conditions or are manipulated.

Misinformation

Synthetic media and automated persuasion can flood the information environment faster than institutions can verify truth.

Surveillance

AI expands the ability to monitor movement, behavior, transactions, communications, and identity in real time.

Cyber risk

Attackers can use AI to accelerate reconnaissance, phishing, malware adaptation, and infrastructure targeting.

Economic instability

AI concentration can amplify inequality, market volatility, and dependence on a small number of firms and platforms.

Autonomous systems

Machines that sense and act with limited human oversight can create safety, liability, and escalation problems.

Weapons integration

AI in command, targeting, and battlefield systems raises the risk of faster conflict cycles and catastrophic error.

Where consequences become real

The most serious AI consequences emerge when powerful systems are embedded into ordinary life and critical decision chains. The following areas deserve close attention from anyone planning for long-term family continuity.

Labor

Job loss and social strain

AI-driven automation is likely to affect far more than repetitive factory work. Administrative roles, customer support, paralegal review, design production, software assistance, financial analysis, logistics planning, and portions of healthcare documentation are already shrinking as machine-generated output takes over part of the work. As firms discover they can reduce headcount while maintaining acceptable performance, the pressure to automate spreads across industries. The result may not be universal unemployment, but it can still produce widespread insecurity, lower bargaining power, reduced middle-class stability, and a more fragile consumer economy. Families that rely on a narrow income stream may find that professional prestige no longer guarantees durable earning power.

Systems

Infrastructure and supply disruption

As AI is integrated into grid balancing, freight routing, warehouse automation, predictive maintenance, water management, and emergency dispatch, efficiency gains come with new fragility. A flawed model, corrupted data stream, malicious prompt chain, or adversarial cyber intrusion can trigger errors that propagate across networks at machine speed. Small failures can become regional disruptions when operators trust automated recommendations too quickly or lack manual fallback capacity. The more optimized a system becomes, the less slack it may retain. In preparedness terms, that means food delivery, fuel access, communications, and utility reliability can all become more vulnerable to synchronized disruption.

Truth

Misinformation and reality erosion

AI can now generate persuasive text, cloned voices, fabricated video, synthetic experts, fake documents, and coordinated influence campaigns at enormous scale. This does not merely create false stories. It degrades the public's ability to know what is real. In a crisis, that matters profoundly. False evacuation orders, fake bank notices, forged executive statements, manipulated military footage, and synthetic emergency guidance can trigger panic, delay response, or redirect public behavior. Once trust is weakened, even authentic warnings may be ignored. A population that cannot distinguish signal from noise becomes easier to manipulate and slower to coordinate.

Security

Surveillance, cyber conflict, and economic concentration

Beyond labor and information risk, AI also changes the balance of power between individuals, institutions, and states. It expands visibility, compresses response time, and concentrates strategic advantage in the hands of those who control data, compute, and deployment pipelines.

Surveillance expansion

AI-enhanced surveillance can combine facial recognition, gait analysis, license plate tracking, transaction monitoring, device metadata, and behavioral prediction into a persistent map of daily life. Even where individual tools are imperfect, the aggregate effect is powerful. Privacy becomes conditional rather than assumed. For high-net-worth families, public figures, and continuity-minded households, this raises concerns about targeting, profiling, coercion, and loss of discretion. Physical security planning increasingly intersects with digital invisibility and data minimization.

Cyber risk at machine speed

AI does not need to invent entirely new forms of cyberattack to be dangerous. It only needs to make existing attacks faster, cheaper, and more adaptive. Automated phishing can become more convincing. Malware can be refined more quickly. Attackers can scan for vulnerabilities, tailor social engineering, and test pathways into infrastructure with greater speed than many defenders can match. This increases the chance of attacks on utilities, hospitals, banks, telecom networks, and transport systems, especially during geopolitical tension.

Economic instability and concentration

AI advantage tends to accumulate around firms and governments with access to large datasets, elite talent, specialized chips, and massive capital. That concentration can deepen inequality, reduce competition, and create chokepoints in the global economy. If a small number of platforms mediate search, commerce, communication, productivity, and decision support, then outages, policy shifts, or political pressure on those platforms can ripple outward quickly. Economic resilience weakens when too much capability is centralized.

Preparedness implications

These trends reinforce the case for private continuity infrastructure: secure shelter, independent utilities, protected communications, stored essentials, and a family operating plan that assumes digital services may be unreliable, manipulated, or selectively restricted. Preparedness is no longer only about weather, war, or civil unrest. It is also about preserving autonomy in a world increasingly shaped by opaque systems.

Autonomy

Autonomous systems raise the stakes

Autonomous and semi-autonomous systems are moving into transportation, industrial operations, perimeter security, drones, robotics, and battlefield support. The central issue is not whether machines can assist humans. It is whether humans remain meaningfully in control when speed, complexity, and incentives push decisions toward automation.

Civilian systems

Autonomous vehicles, robotic warehouses, and AI-directed industrial tools can improve throughput while introducing new failure modes, liability disputes, and cascading accidents when sensors, models, or command layers fail.

Strategic systems

When AI is connected to targeting, threat detection, missile defense, drone swarms, or battlefield command software, compressed decision cycles can increase the risk of miscalculation, accidental escalation, or rapid conflict expansion.

Weapons

AI and weapons systems

One of the most serious long-term concerns is the integration of artificial intelligence into weapons systems, targeting pipelines, intelligence fusion, and command support. Even when humans remain formally in the loop, the practical reality may be different. If commanders are given machine-generated recommendations under severe time pressure, the machine can become the de facto decision-maker.

AI-enabled targeting can increase speed and scale, but it can also magnify error. Misidentified objects, spoofed signals, corrupted data, adversarial deception, or overconfident models can produce lethal outcomes before human review catches up. In a contested environment, each side may feel pressure to automate further simply to avoid falling behind. That creates an arms race in decision speed, not just firepower.

For civilians, the implication is clear: strategic instability can rise even without a deliberate plan for total war. Faster systems can shorten warning times, increase the chance of misinterpretation, and make geopolitical crises more volatile. Families who invest in secured underground living are not betting on one scenario. They are building optionality against a world in which technological acceleration makes crisis management harder, not easier.

Consequences

What families should plan for

Income disruption

Assume career volatility, contract compression, and abrupt changes in professional demand.

Payment friction

Maintain redundancy for periods when digital finance, verification, or banking access becomes unstable.

Information distrust

Develop private verification habits and trusted communication channels for crisis conditions.

Utility interruptions

Plan for power, water, filtration, storage, and communications that do not depend on fragile public systems.

Privacy erosion

Reduce unnecessary exposure of location, assets, routines, and family data across platforms.

Faster escalation

Prepare for crises that move from rumor to disruption before institutions can stabilize the narrative.